Graphical model


A graphical model or probabilistic graphical model (PGM) or structured probabilistic model is a probabilistic model for which a graph expresses the conditional dependence structure between random variables. They are commonly used in probability theory, statistics—particularly Bayesian statistics—and machine learning.

An example of a graphical model. Each arrow indicates a dependency. In this example: D depends on A, D depends on B, D depends on C, C depends on B, and C depends on D.


Types of graphical models

Generally, probabilistic graphical models use a graph-based representation as the foundation for encoding a distribution over a multi-dimensional space. The graph is a compact, factorized representation of a set of independences that hold in that distribution. Two families of graphical representations are commonly used: Bayesian networks and Markov random fields. Both express factorization and independence properties, but they differ in the set of independences they can encode and in the factorization of the distribution that they induce.[1]

Bayesian network

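If the network structure of the model is a directed acyclic graph, the model represents a factorization of the joint probability of all random variables. More precisely, if the random variables are $X_1,\ldots,X_n$, then the joint probability satisfies

$$P[X_1,\ldots,X_n]=\prod_{i=1}^{n}P[X_i\mid pa_i]$$

where $pa_i$ is the set of parents of node $X_i$ (the nodes with edges directed towards $X_i$). In other words, the joint distribution factors into a product of conditional distributions. For example, the graphical model in the figure above (which, as drawn, is not a directed acyclic graph, since C and D depend on each other) consists of the random variables $A, B, C, D$ with a joint probability density that factors as

$$P[A,B,C,D]=P[A]\cdot P[B]\cdot P[C,D\mid A,B]$$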
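The product-of-conditionals factorization can be sketched in a few lines of Python. The network below is a hypothetical directed acyclic graph (A → C, B → C, C → D), not the figure above, and every probability in it is made up for illustration; the names `parents`, `cpt`, and `joint` are assumptions of this sketch rather than part of any library.

```python
from itertools import product

# Hypothetical DAG (not the figure above): A -> C, B -> C, C -> D.
# Every variable is binary; all numbers are invented for illustration.
parents = {"A": [], "B": [], "C": ["A", "B"], "D": ["C"]}

# cpt[X][parent_values] = P[X = 1 | parents = parent_values]
cpt = {
    "A": {(): 0.3},
    "B": {(): 0.6},
    "C": {(0, 0): 0.1, (0, 1): 0.5, (1, 0): 0.7, (1, 1): 0.9},
    "D": {(0,): 0.2, (1,): 0.8},
}

def joint(assignment):
    """Return P[A, B, C, D] as the product of P[X_i | pa_i]."""
    p = 1.0
    for var, pa in parents.items():
        p_one = cpt[var][tuple(assignment[q] for q in pa)]
        p *= p_one if assignment[var] == 1 else 1.0 - p_one
    return p

# Summing the factorized joint over all 2^4 assignments should give 1.
names = list(parents)
total = sum(joint(dict(zip(names, values)))
            for values in product([0, 1], repeat=len(names)))
```

The point of the representation is economy: this network needs 1 + 1 + 4 + 2 = 8 numbers, whereas an unstructured joint table over four binary variables needs 15.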

Each node is conditionally independent of its non-descendants given the values of its parents. In general, any two sets of nodes are conditionally independent given a third set if a criterion called d-separation holds in the graph. Local independences and global independences are equivalent in Bayesian networks.
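A conditional independence implied by the graph can be checked numerically on a small case. The sketch below assumes a hypothetical three-node chain A → B → C with invented probabilities; in such a chain B d-separates A from C, so A and C should be independent given B but dependent marginally. It verifies this by brute-force enumeration and is not an implementation of the d-separation criterion itself.

```python
from itertools import product

# Hypothetical chain A -> B -> C over binary variables; numbers are invented.
p_a = {0: 0.7, 1: 0.3}
p_b_given_a = {0: {0: 0.9, 1: 0.1}, 1: {0: 0.2, 1: 0.8}}  # p_b_given_a[a][b]
p_c_given_b = {0: {0: 0.6, 1: 0.4}, 1: {0: 0.3, 1: 0.7}}  # p_c_given_b[b][c]

# Joint distribution from the chain factorization P[A] P[B|A] P[C|B].
joint = {(a, b, c): p_a[a] * p_b_given_a[a][b] * p_c_given_b[b][c]
         for a, b, c in product([0, 1], repeat=3)}

def prob(**fixed):
    """Marginal probability of a partial assignment, e.g. prob(a=1, c=0)."""
    total = 0.0
    for (a, b, c), p in joint.items():
        values = {"a": a, "b": b, "c": c}
        if all(values[name] == v for name, v in fixed.items()):
            total += p
    return total

def max_gap_given_b():
    """Largest |P[a,c|b] - P[a|b] P[c|b]|; zero means A and C are independent given B."""
    return max(abs(prob(a=a, b=b, c=c) / prob(b=b)
                   - (prob(a=a, b=b) / prob(b=b)) * (prob(b=b, c=c) / prob(b=b)))
               for a, b, c in product([0, 1], repeat=3))

def max_gap_marginal():
    """Largest |P[a,c] - P[a] P[c]|; nonzero means A and C are marginally dependent."""
    return max(abs(prob(a=a, c=c) - prob(a=a) * prob(c=c))
               for a, c in product([0, 1], repeat=2))
```

Calling `max_gap_given_b()` should return a value at floating-point noise level, while `max_gap_marginal()` should be clearly positive for these numbers.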

This type of graphical model is known as a directed graphical model, Bayesian network, or belief network. Classic machine learning models such as hidden Markov models and neural networks, as well as newer models such as variable-order Markov models, can be considered special cases of Bayesian networks.

Other types

- A factor graph is an undirected bipartite graph connecting variables and factors. Each factor represents a function over the variables it is connected to. This is a helpful representation for understanding and implementing belief propagation.

- A clique tree or junction tree is a tree of cliques, used in the junction tree algorithm.

- A chain graph is a graph which may have both directed and undirected edges, but without any directed cycles (i.e. if we start at any vertex and move along the graph respecting the directions of any arrows, we cannot return to the vertex we started from if we have passed an arrow). Both directed acyclic graphs and undirected graphs are special cases of chain graphs, which can therefore provide a way of unifying and generalizing Bayesian and Markov networks.[2]

- An ancestral graph is a further extension, having directed, bidirected and undirected edges.[3]
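To make the factor graph entry above concrete, here is a minimal sketch of one as a data structure: variable nodes, factor nodes, and an edge wherever a variable appears in a factor's scope. The variables, tables, and function names are all hypothetical. Marginals are computed by brute-force enumeration for clarity; belief propagation would reach the same marginals on a tree-structured graph like this one without enumerating every assignment.

```python
from itertools import product

# Hypothetical factor graph over binary variables x1, x2, x3.
# Each factor node is (scope, table); an edge joins a factor to every
# variable in its scope, which makes the graph bipartite.
variables = ["x1", "x2", "x3"]
factors = [
    (("x1",), {(0,): 1.0, (1,): 2.0}),
    (("x1", "x2"), {(0, 0): 3.0, (0, 1): 1.0, (1, 0): 1.0, (1, 1): 3.0}),
    (("x2", "x3"), {(0, 0): 2.0, (0, 1): 1.0, (1, 0): 1.0, (1, 1): 2.0}),
]

def unnormalized(assignment):
    """Product of all factor values for one full assignment."""
    score = 1.0
    for scope, table in factors:
        score *= table[tuple(assignment[v] for v in scope)]
    return score

def marginal(var):
    """Normalized marginal of one variable, by summing over all assignments."""
    totals = {0: 0.0, 1: 0.0}
    for values in product([0, 1], repeat=len(variables)):
        assignment = dict(zip(variables, values))
        totals[assignment[var]] += unnormalized(assignment)
    z = sum(totals.values())
    return {value: t / z for value, t in totals.items()}
```

Enumeration costs time exponential in the number of variables, which is why message-passing algorithms matter for anything beyond toy graphs.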

Random field techniques

- A Markov random field, also known as a Markov network, is a model over an undirected graph. A graphical model with many repeated subunits can be represented with plate notation.

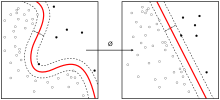

- A conditional random field is a discriminative model specified over an undirected graph.

- A restricted Boltzmann machine is a bipartite generative model specified over an undirected graph.
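For comparison with the directed factorization used by Bayesian networks, a Markov random field is usually written as a normalized product of non-negative potential functions over the cliques of the undirected graph. The symbols $\phi_c$ (potential of clique $c$), $\mathcal{C}$ (the set of cliques), and $Z$ (the normalizing constant, often called the partition function) are notation introduced for this sketch and do not appear in the text above. The form is guaranteed only for strictly positive distributions that satisfy the graph's Markov properties.

```latex
P[X_1,\ldots,X_n] = \frac{1}{Z}\prod_{c \in \mathcal{C}} \phi_c(X_c),
\qquad
Z = \sum_{x_1,\ldots,x_n}\;\prod_{c \in \mathcal{C}} \phi_c(x_c)
```

Unlike the conditional probabilities in a Bayesian network, the potentials need not sum to one individually, so the global constant $Z$ is required to obtain a valid distribution.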

Applications

The graphical model framework provides algorithms for discovering and analyzing structure in complex distributions, describing them succinctly, and extracting the unstructured information, which allows the models to be constructed and used effectively.[1] Applications of graphical models include causal inference, information extraction, speech recognition, computer vision, decoding of low-density parity-check codes, modeling of gene regulatory networks, gene finding, diagnosis of diseases, and graphical models for protein structure.

See also

- Belief propagation

- Structural equation model

Notes

^ a b Koller, D.; Friedman, N. (2009). Probabilistic Graphical Models. Massachusetts: MIT Press. p. 1208. ISBN 0-262-01319-3.

^ Frydenberg, Morten (1990). "The Chain Graph Markov Property". Scandinavian Journal of Statistics. 17 (4): 333–353. JSTOR 4616181. MR 1096723.

^ Richardson, Thomas; Spirtes, Peter (2002). "Ancestral graph Markov models". Annals of Statistics. 30 (4): 962–1030. CiteSeerX 10.1.1.33.4906. doi:10.1214/aos/1031689015. MR 1926166. Zbl 1033.60008.

Further reading

Books and book chapters

Barber, David (2012). Bayesian Reasoning and Machine Learning. Cambridge University Press. ISBN 978-0-521-51814-7.

Bishop, Christopher M. (2006). "Chapter 8. Graphical Models" (PDF). Pattern Recognition and Machine Learning. Springer. pp. 359–422. ISBN 0-387-31073-8. MR 2247587.

Cowell, Robert G.; Dawid, A. Philip; Lauritzen, Steffen L.; Spiegelhalter, David J. (1999). Probabilistic networks and expert systems. Berlin: Springer. ISBN 0-387-98767-3. MR 1697175. A more advanced and statistically oriented book

Jensen, Finn (1996). An introduction to Bayesian networks. Berlin: Springer. ISBN 0-387-91502-8.

Pearl, Judea (1988). Probabilistic Reasoning in Intelligent Systems (2nd revised ed.). San Mateo, CA: Morgan Kaufmann. ISBN 1-55860-479-0. MR 0965765. A computational reasoning approach, where the relationships between graphs and probabilities were formally introduced.

Journal articles

Airoldi, Edoardo M. (2007). "Getting Started in Probabilistic Graphical Models". PLoS Computational Biology. 3 (12): e252. doi:10.1371/journal.pcbi.0030252. PMC 2134967. PMID 18069887.

Jordan, M. I. (2004). "Graphical Models". Statistical Science. 19: 140–155. doi:10.1214/088342304000000026.

Ghahramani, Zoubin (May 2015). "Probabilistic machine learning and artificial intelligence". Nature. 521: 452–459. doi:10.1038/nature14541.

Other

- Heckerman's Bayes Net Learning Tutorial

- A Brief Introduction to Graphical Models and Bayesian Networks

- Sargur Srihari's lecture slides on probabilistic graphical models

External links

- Graphical models and Conditional Random Fields

- Probabilistic Graphical Models taught by Eric Xing at CMU
